In the field of VR helmets, there is a method of foveal rendering, in which a significant part of the resources is spent on a narrow area of the image, which is looked at by the person and the rest areas are created with much less quality. Developers from Facebook have created an algorithm that effectively restores the quality image in areas drawn with low quality. The article was presented at THE SIGGRAPH Asia 2019 conference.

To comfortably immerse the user in a virtual environment, the VR helmet must have a high resolution, operate at a high frequency (a frequency of 90 hertz and higher is considered a comfortable indicator), and also calculate an image for the entire field of view around the head. This leads to the fact that VR-helmets require a connection to a sufficiently powerful computer for their work, and stand-alone helmets at the current level of technology development significantly lag behind the characteristics of the connected ones.

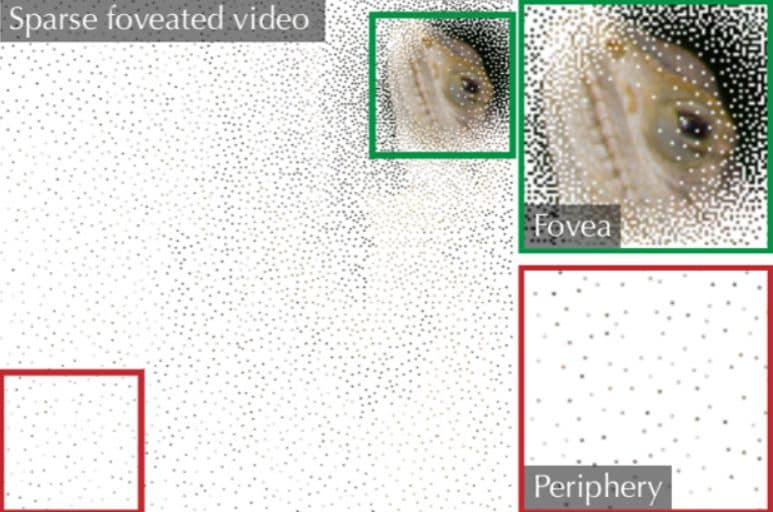

As a solution, engineers have been developing technology for foveal image rendering for VR helmets based on the characteristics of human vision for several years. The fact is that we see a clear only a small area in the centre (foveal zone) of our field of view, and peripheral areas of vision capture much less detail. Accordingly, computing resources can be saved by tracking the direction of view and drawing only the central area with high resolution.

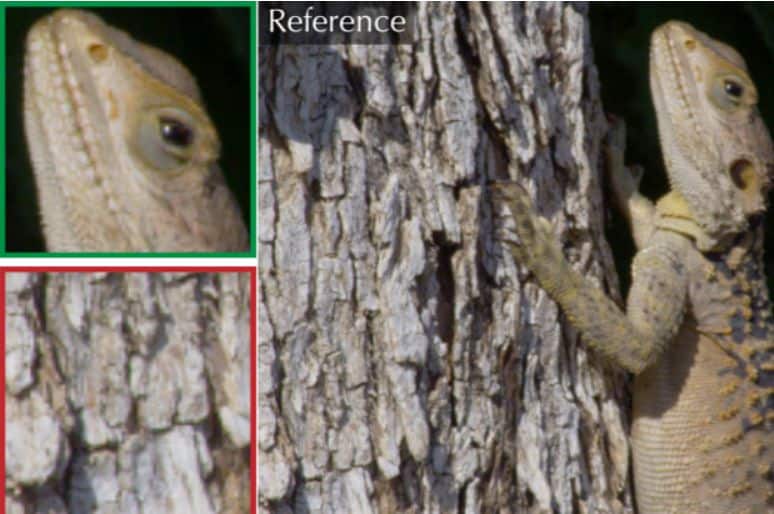

Researchers at Facebook Reality Labs, led by Gizem Rufo, have created a neural network capable of taking images with a clear foveal zone and rare pixels in the peripheral zone, and restore it to a high-quality image that looks like the original image to the average user. The algorithm works on the basis of the U-Net network, which has a decoder structure.

Since the neural network works with video — that is, a sequence of frames that are semantically interconnected — the results of restoring neighbouring frames must be consistent with each other. For this, the developers added recurrence blocks to the algorithm, which use the network state on the current frame to restore the next one.

In addition, the developers used the popular architecture of the generative-competitive neural network for training, in which the result of the generator (the main neural network) is given to the discriminator (the testing network), which tries to determine whether this image is real or created by the algorithm. Thanks to this, both parts are constantly trained and over time the generator significantly improves the quality of its work.

Researchers trained the algorithm on a dataset from various videos, such as humans or animals. The original videos were processed by an algorithm that randomly moved the direction of view and washed almost all the pixels outside the central field of view from the frame. As a result, the developers were able to train the algorithm to recreate frames with fairly high quality. For example, a study on volunteers showed that as the pixels were compressed (increasing the proportion of erased pixels), the visibility of the image artifacts increased, but only when compressed 37 times 50 %.

The developers note that they used a computer with four NVIDIA Tesla V100 graphics cards to operate the neural network. However, the power of these video accelerators is so high that if used for conventional rendering at a frequency of 90 hertz, the authors could probably get much more a better image than when restored by a neural network, so the purpose of the work seems to be entirely exploratory.