Developers from Microsoft Research introduced an algorithm that can animate static frames of faces using unprocessed recordings of people’s speech. The model they created is context-sensitive: it extracts from the audio not only phonetic characteristics, but also emotional tone and external noise, due to which it can superimpose all possible aspects of speech on a static frame.

To animate still images in most cases, the transfer of information from videos to the desired frame is used. In solving this problem, the developers have already achieved significant success: now there are models that can reliably transfer speech from a video sequence to a static frame, recreating the speaker’s facial expressions.

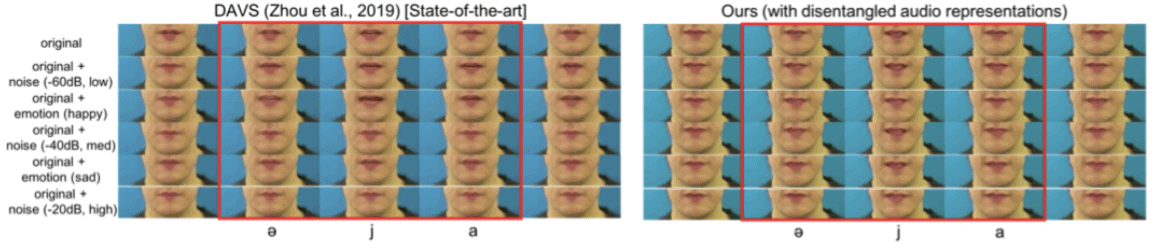

Difficulties in solving, however, may arise if the image needs to be “revived” with the help of an audio series: all existing algorithms that can transfer audio to a static frame so that a natural animation or even video of the speech process is obtained are limited in that they can work only with a clear, clearly audible speech delivered in a neutral voice without emotional color. Human speech, however, is multifaceted and ideally it is necessary to teach such algorithms to recreate all its aspects.

Gaurav Mittal and Baoyuan Wang from Microsoft Research decided to do this. Their algorithm receives an audio file as an input and, using a variational auto-encoder based on neural networks with a long short-term memory, identifies key aspects: the phonetic and emotional component (in total, the algorithm understands six basic emotions), as well as third-party noise. Based on the selected information, the speaker’s facial expressions are reconstructed — video files are used for this — and superimposed on the initially static image.

To learn the algorithm, the researchers used three different datasets: GRID, which consists of thousands of video recordings of 34 people’s speech delivered with a neutral expression, 7.4 thousand video recordings of speeches with different emotional colors taken from the CREMA-D dataset, as well as more than one hundred thousand video clips TED.

As a result, the researchers were able to animate static images even using audio with background noise of up to 40 decibels, and also successfully use the emotional components of the speaker’s speech in the animation. The authors themselves do not give the animations themselves, but give a comparison of the resulting frames with the results of one of the first such algorithms.

The authors of the work also clarified that their algorithm can be used in all existing systems that can animate static images using audio: for this, it will be necessary to replace the processing audio component in third-party algorithms.

Speech, of course, carries a lot of information about the speaker, and not only about emotions and intentions, but also, for example, about the appearance. Recently, American developers taught the algorithm to recreate a person’s approximate appearance by recording his speech: the system accurately conveys the gender, age and race of the speaker.

Via | arXiv