American developers have created a neural network that can recognize people's actions both from video and from radio-wave scans made through walls and other obstacles. The authors achieved this by first converting both types of data into a skeletal model and then analyzing it with a single action recognition algorithm. The work will be presented at the ICCV 2019 conference.

In computer vision, technologies that recognize body posture from video are widely used, often to determine the behavior of one person or of many people at once. To do this, the algorithm builds a skeletal model of the body from the source frames, which can then be compared with the postures characteristic of a particular type of activity. For example, Indian developers have created a drone that can recognize violence in a crowd, and Russian engineers are developing a device that can detect falls or unusual behavior of elderly people at home.

Like other computer vision technologies, algorithms that build a body model depend strongly on frame quality and lighting, and they do not work when the body is occluded by other objects in the frame. There are also technologies that use radio signals rather than video as input. So far, however, these have had significantly lower accuracy.

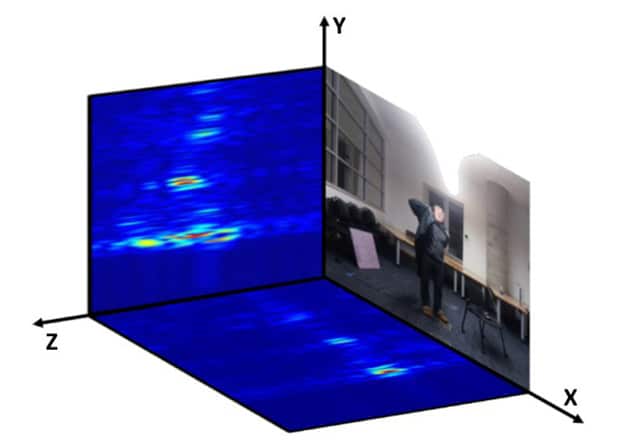

Engineers at the Massachusetts Institute of Technology, led by Dina Katabi, have created an algorithm that combines both types of data. It can be thought of as three main modules. First, the raw data from the camera or the radio transceiver is fed to the corresponding neural network, which creates a skeletal model of the body. Then a shared algorithm analyzes the skeletal models in the frame and assigns them the appropriate actions. It can also identify joint actions, such as a handshake.
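The modular design described above can be illustrated with a minimal Python sketch. It is not the authors' code: the `Skeleton` type, the `recognize` function, and the joint name `right_hand` are hypothetical, and `toy_classifier` is a hand-written distance rule standing in for the trained action recognition network. The point is only that the classifier sees skeletons and never the raw video or radio data.

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Joint = Tuple[float, float, float]  # (x, y, z); metres are an assumption


@dataclass
class Skeleton:
    """Pose of one person in one frame: joint name -> 3D position."""
    joints: Dict[str, Joint]


# One frame holds the skeletons of everyone detected in it.
Frame = List[Skeleton]


def toy_classifier(frames: List[Frame], reach: float = 0.15) -> str:
    """Toy stand-in for the learned action recognizer (assumption).

    Returns "handshake" if, in any frame, two people's right hands are
    closer than `reach`; otherwise returns "other".
    """
    for frame in frames:
        for i in range(len(frame)):
            for j in range(i + 1, len(frame)):
                a = frame[i].joints["right_hand"]
                b = frame[j].joints["right_hand"]
                if math.dist(a, b) < reach:
                    return "handshake"
    return "other"


def recognize(
    raw: object,
    to_skeletons: Callable[[object], List[Frame]],
    classify: Callable[[List[Frame]], str] = toy_classifier,
) -> str:
    """Run a modality-specific front end, then one shared classifier."""
    return classify(to_skeletons(raw))
```

In this sketch, swapping the camera for the radio transceiver would mean passing a different `to_skeletons` function while leaving `classify` unchanged.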

To obtain visual data, the developers used a multi-camera system, the open-source AlphaPose algorithm, and an algorithm that turns two-dimensional skeletal models into three-dimensional ones. For radio-wave scanning through walls and other obstacles, the engineers built a transceiver operating at frequencies from 5.4 to 7.2 gigahertz. It is equipped with two antenna arrays, one oriented vertically and one horizontally, which emit radio waves and then receive their reflections from objects. Two-dimensional images are formed from these signals, and the neural network that creates skeletal models receives a pair of such images, one from the vertical array and one from the horizontal array.
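For a sense of scale, the transceiver's stated frequency range can be converted into wavelengths with the standard relation wavelength = c / f. The short calculation below is only a worked example of that arithmetic. The function name is made up for illustration, and nothing here comes from the authors' implementation.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def wavelength_cm(freq_ghz: float) -> float:
    """Free-space wavelength in centimetres for a frequency in gigahertz."""
    return C / (freq_ghz * 1e9) * 100.0


low_ghz, high_ghz = 5.4, 7.2           # band quoted in the article
bandwidth_ghz = high_ghz - low_ghz     # width of the band, about 1.8 GHz

print(f"wavelength at {low_ghz} GHz: {wavelength_cm(low_ghz):.2f} cm")
print(f"wavelength at {high_ghz} GHz: {wavelength_cm(high_ghz):.2f} cm")
print(f"bandwidth: {bandwidth_ghz:.1f} GHz")
```

The band therefore corresponds to wavelengths of roughly 4.2 to 5.6 centimetres.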

Tianhong Li et al. / ICCV 2019

The developers trained the neural networks in the algorithm on several datasets, including their own dataset for building models from radio signals and the publicly available PKU-MMD action recognition dataset. In testing, the algorithm recognized actions with 87.8 percent accuracy when the person was visible and with 83 percent accuracy when working through a wall.