Researchers at Facebook AI Research have created an algorithm that automatically alters a person’s face in a video so that it can no longer be recognized. The system, based on an adversarial autoencoder and a face classifier, changes the main facial features of the person in the video while preserving facial expressions and lighting. The resulting videos look natural, and neither face recognition systems nor human viewers can identify the person in them.

Automatic face recognition now works quite well and makes life considerably easier in many areas, for example when identifying passengers at airports or when using a smartphone. At the same time, there is growing concern that face recognition technologies can violate users’ privacy: if even a few photographs of a person are freely available, such systems can recognize that person without their knowledge.

One solution to this problem is to deceive recognition systems, either by modifying pixels in the frame or by using adversarial examples, which mislead computer vision algorithms into seeing something other than what the image actually shows.
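As a rough illustration of how a pixel-level perturbation can mislead a classifier, the sketch below applies one well-known technique, the fast gradient sign method, to a toy logistic classifier. The weights, the three-value "image", and the function names are hypothetical and chosen only for illustration; real face recognition networks are far larger, and the step size here is exaggerated so the effect is visible.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, pixels):
    """Probability that the toy classifier assigns to the positive class."""
    return sigmoid(sum(w * p for w, p in zip(weights, pixels)) + bias)

def fgsm_perturb(weights, bias, pixels, label, epsilon):
    """Shift each pixel by +/- epsilon in the direction that increases the loss."""
    prob = predict(weights, bias, pixels)
    # For a logistic model, d(cross-entropy)/d(pixel_i) = (prob - label) * w_i.
    grad = [(prob - label) * w for w in weights]
    signs = [(g > 0) - (g < 0) for g in grad]
    # Clip so the perturbed values stay in the valid pixel range [0, 1].
    return [min(1.0, max(0.0, p + epsilon * s)) for p, s in zip(pixels, signs)]

# Hypothetical toy model and input.
weights = [2.0, -1.0, 0.5]
bias = 0.0
pixels = [0.6, 0.2, 0.4]
epsilon = 0.5

before = predict(weights, bias, pixels)
adversarial = fgsm_perturb(weights, bias, pixels, label=1, epsilon=epsilon)
after = predict(weights, bias, adversarial)
print(f"before: {before:.3f}, after: {after:.3f}")
```

In this toy setup the predicted probability for the true class drops below 0.5 after the perturbation, even though no pixel moves by more than `epsilon`.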

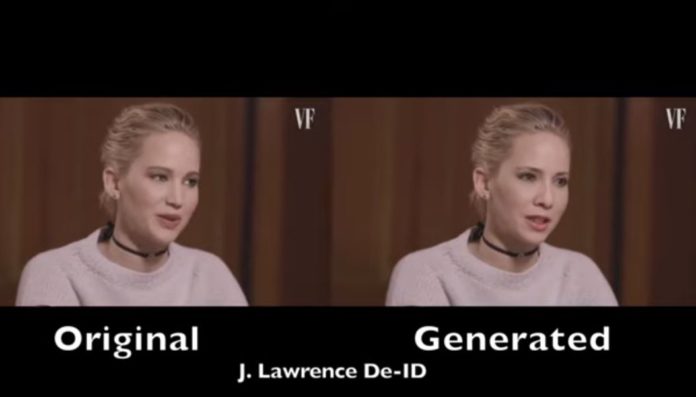

When it comes to recognizing people specifically, another option is to swap faces in photos and videos. However, this does not always work perfectly, and people often do not want to become completely unrecognizable or to take on another person’s identity. Researchers at Facebook AI Research, led by Oran Gafni, have proposed a different approach: altering the faces of people in a video so that they can no longer be identified.

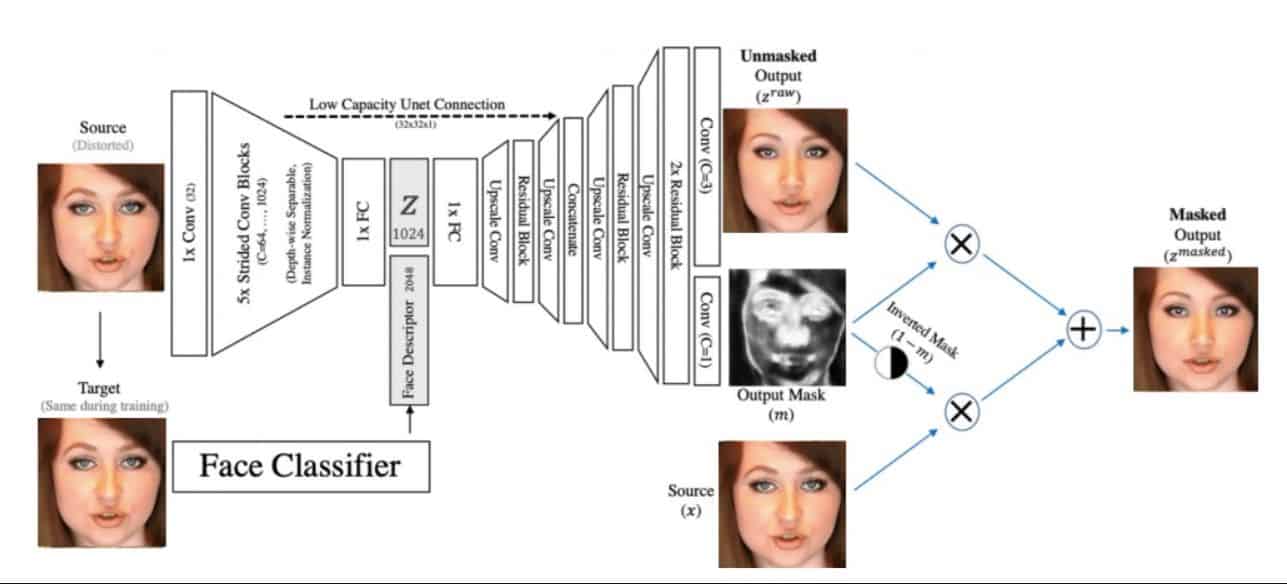

Their algorithm is based on an adversarial autoencoder (a similar approach is used in generative adversarial networks), whose latent space incorporates a face classifier based on ResNet-50. First, the algorithm changes the person’s face in the video, taking into account the lighting of the image and the shape of the face; this produces a preliminary face image that serves as the basis for the result. Then a mask extracted from the face in the original video is applied to this image, and the final face is formed by the decoder. To train the algorithm, the researchers used the CelebA-HQ dataset of celebrity faces.
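The masking step can be pictured with a minimal sketch. It assumes that the mask is a soft per-pixel weight in the range [0, 1] and that the generated face is blended linearly with the original frame; both the blending rule and the name `blend_with_mask` are illustrative assumptions, and the actual network may combine these outputs differently.

```python
def blend_with_mask(original, generated, mask):
    """Blend two grayscale images given as equally sized lists of rows.

    A mask value of 1 takes the generated pixel, 0 keeps the original pixel,
    and values in between mix the two (assumed linear blending).
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("images and mask must have the same height")
    blended = []
    for row_o, row_g, row_m in zip(original, generated, mask):
        if not (len(row_o) == len(row_g) == len(row_m)):
            raise ValueError("images and mask must have the same width")
        blended.append(
            [m * g + (1.0 - m) * o for o, g, m in zip(row_o, row_g, row_m)]
        )
    return blended

# Hypothetical 2x2 example: the top row is the face region, the bottom row is background.
original = [[0.2, 0.2], [0.2, 0.2]]
generated = [[0.8, 0.8], [0.8, 0.8]]
mask = [[1.0, 0.5], [0.0, 0.0]]

result = blend_with_mask(original, generated, mask)
print(result)
```

Under this assumption, pixels outside the masked face region are copied from the original frame, which is consistent with the background and lighting staying intact.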

Thus, the algorithm changes the main features of the person’s face in the video (for example, the shape of the nose, eyebrows, or eyes), while everything else (for example, lighting, facial expressions, and speech) remains unchanged. Such a substitution can deceive automatic recognition systems, yet the person’s image remains similar to the original. In essence, the algorithm convincingly replaces the person’s face in the video with the face of a non-existent person.

To test the algorithm, the researchers ran the resulting videos through ArcFace, an automatic face recognition algorithm that can recognize more than 50 thousand people. The system handled the original videos effectively but could not identify a specific person in the processed ones: depending on the quality of the resulting video, ArcFace returned more than three thousand candidate faces that might be the one shown.
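To see why a de-identified face can yield many candidates instead of one clear match, consider a minimal sketch of embedding-based identification. It assumes that the recognizer maps each face to a vector and compares vectors by cosine similarity; the three-dimensional embeddings, the names in the gallery, and the threshold are made up for illustration and are not taken from the experiment.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank_identities(probe, gallery):
    """Return (name, similarity) pairs sorted from most to least similar."""
    scores = [(name, cosine_similarity(probe, emb)) for name, emb in gallery.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)

def candidates_above(probe, gallery, threshold):
    """Names of all gallery identities whose similarity reaches the threshold."""
    return [name for name, score in rank_identities(probe, gallery) if score >= threshold]

# Hypothetical gallery of known identities with toy embeddings.
gallery = {
    "alice": [1.0, 0.0, 0.0],
    "bob": [0.0, 1.0, 0.0],
    "carol": [0.0, 0.0, 1.0],
}

original_probe = [0.9, 0.1, 0.0]      # close to one known identity
deidentified_probe = [0.5, 0.5, 0.5]  # equally far from every identity

matches_original = candidates_above(original_probe, gallery, threshold=0.5)
matches_deidentified = candidates_above(deidentified_probe, gallery, threshold=0.5)
print(matches_original, matches_deidentified)
```

In this toy gallery the original probe matches a single identity, while the altered probe matches every identity equally well, so the recognizer has no basis for picking one.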

Human viewers could not identify the person in the video either: 20 people who were asked to identify the person in the resulting videos answered correctly in only 53.6 percent of cases, which is very close to chance. Notably, the algorithm does not need to be trained in advance for specific videos: it can change the face of any person.

Governments in various countries are also concerned about the privacy violations that face recognition technologies can cause. For example, in May of this year, San Francisco authorities banned the monitoring of citizens using such technologies.