Engineers from the UK, South Korea and Japan have created a bracelet that recognizes finger gestures and uses them as an input interface. Unlike comparable prototypes, it recognizes gestures from the movement of the back of the hand, so the method could work with a smartwatch camera even when the fingers are outside its field of view.

Mass-produced smartwatches have small touchscreens and, as a rule, one or two control buttons. As a result, they are mainly suited to quickly viewing notifications or to simple actions such as switching songs, while more complex tasks such as typing are very difficult. As a solution, engineers propose alternative input/output interfaces. For example, several research groups have experimented with watches equipped with a projector and sensors, which turn the surface of the hand into a large touchscreen.

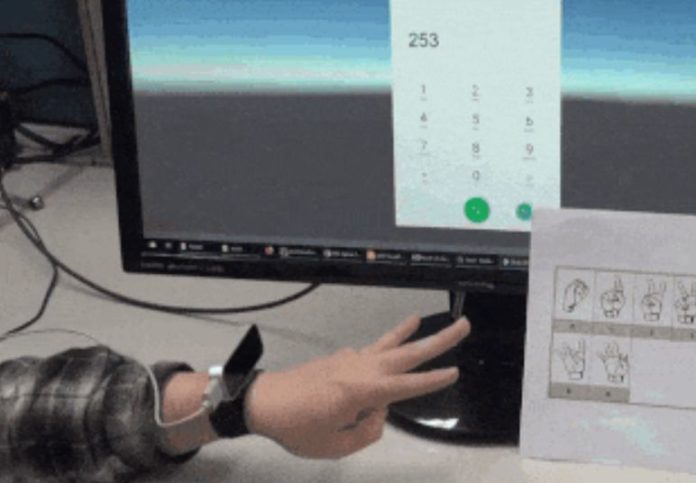

In addition, some mass-produced smartwatches have a camera that could be used to recognize hand gestures. In practice, however, recognition is hindered because the camera sits close to the surface of the hand and the fingers are often not visible in its images. The developers, led by Hideki Koike of the Tokyo Institute of Technology, proposed training the system from the outset to recognize finger gestures from an image of the back of the hand, which shows changes in the shape of the hand rather than the fingers themselves.

The prototype consists of a bracelet with a Leap Motion sensor mounted on it; the sensor contains two infrared cameras and three LEDs for illumination. The sensor is mounted so that its scanning plane is perpendicular to the arm. For gesture recognition, the researchers chose a two-stream convolutional neural network: the original image is fed to one subnetwork, and a processed image, composed of several previous frames with brightness increased tenfold, is fed to the second.
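
The two network inputs described above can be illustrated with a short Python sketch. It is not the researchers' code. The article says only that the processed image is made up of several previous frames with tenfold brightness, so the number of frames (`HISTORY_LEN`), the averaging step and the class name `TwoStreamInputBuilder` are all assumptions made for illustration.

```python
from collections import deque

import numpy as np

HISTORY_LEN = 4         # assumed; the article says only "several" previous frames
BRIGHTNESS_GAIN = 10.0  # tenfold brightness increase, as stated in the article


class TwoStreamInputBuilder:
    """Hypothetical helper that prepares the two inputs of a two-stream network."""

    def __init__(self, history_len=HISTORY_LEN, gain=BRIGHTNESS_GAIN):
        self.gain = gain
        self.history = deque(maxlen=history_len)  # most recent previous frames

    def push(self, frame):
        """Accept one grayscale frame (H x W, values 0..255).

        Returns (raw, processed) once enough previous frames are buffered,
        otherwise None.
        """
        frame = np.asarray(frame, dtype=np.uint8)
        if len(self.history) < self.history.maxlen:
            self.history.append(frame)
            return None
        # Assumption: previous frames are combined by averaging, then brightened.
        stacked = np.stack(list(self.history)).astype(np.float32)
        brightened = stacked.mean(axis=0) * self.gain
        processed = np.clip(brightened, 0, 255).astype(np.uint8)
        self.history.append(frame)  # the current frame becomes history
        return frame, processed
```

In this sketch, `raw` would go to the first subnetwork and `processed` to the second. Clipping to the 0..255 range keeps the brightened image a valid 8-bit frame.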

The developers trained the algorithm to recognize the ten digits of American Sign Language, as well as taps with the five fingers. For digit recognition, accuracy was 88 percent on the full frame, in which the fingers were visible, and 65 percent on the cropped frame, in which only the back of the hand was visible. For tap recognition, accuracy was 67.5 and 45 percent, respectively.