Learning to write is a process that involves not only copying characters but also learning how each of them is traced, the spacing between them when they form words or phrases and, of course, their meaning. For centuries this task has been exclusive to human beings, but now robots, with the help of artificial intelligence, could be close to matching us.

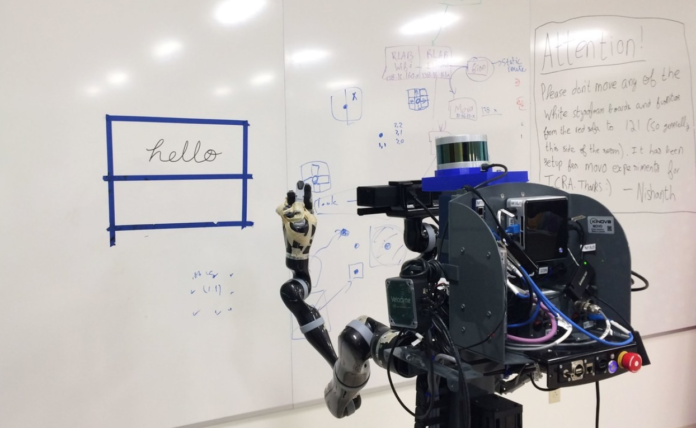

Robots can already imitate or duplicate what we draw or write, but they do it “raw”: they take in the image as a whole and reproduce it line by line, like a printer. Now, thanks to a project at Brown University in Rhode Island, United States, we can see a robot write and draw like a human being, even in languages it does not know and has never seen.

Can a robot imitate a stroke just by looking at the result?

Atsunobu Kotani, a student at Brown University, developed a machine learning algorithm that uses neural networks to analyze images of handwritten words or sketches and deduce the sequence of strokes that produced them.

Stefanie Tellex, a robotics specialist at Brown University, developed the robotic system into which the algorithm was integrated so that the robot could work on its own. The aim was to create a robot capable of communicating fluidly with human beings.

With the robot and the algorithm in place, the system was trained on a set of Japanese characters. It then reproduced those characters, using the strokes that created them, with approximately 93% accuracy. That result was not the surprise; the surprise came when the robot did the same with Latin characters, including handwritten and cursive script, that is, characters it had never seen and did not know.

The algorithm

The key to this feat lies in Kotani's algorithm, which helps the robot decide where and how to place each stroke and how long it should be (which is what distinguishes one letter of the alphabet from another), as well as the order in which each letter or symbol must be placed to form the correct word.

The robot relies on two models that allow it to write on its own:

- Global Model: lets the robot look at the image as a whole, which helps it decide the most likely starting point for a particular word or letter, as well as the most likely way to move on to the next symbol or letter.

- Local Model: helps the robot analyze each letter individually, that is, how to make the correct movement in the correct direction through to the end of that character's line, as well as the character's placement, size and spacing.
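To make the global/local division more concrete, here is a toy sketch in Python. It is not the Brown team's method: their models are neural networks trained on Japanese characters, whereas the functions below (`global_start`, `local_trace` and `image_to_strokes`, all hypothetical names) use simple hand-written rules as stand-ins. The "global" step scans the whole image and picks a starting point, preferring the end of a stroke; the "local" step then follows the inked pixels from that point until the stroke runs out, and the loop repeats until every pixel has been traced.

```python
from typing import List, Set, Tuple

Pixel = Tuple[int, int]  # (row, col)

# 8-connected neighbourhood offsets
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, -1), (0, 1), (1, -1), (1, 0), (1, 1)]


def ink_pixels(image: List[str]) -> Set[Pixel]:
    """Collect the coordinates of '#' pixels in a text-art image."""
    return {(r, c) for r, row in enumerate(image) for c, ch in enumerate(row) if ch == "#"}


def neighbours(p: Pixel, remaining: Set[Pixel]) -> List[Pixel]:
    """Inked, not-yet-traced pixels adjacent to p (8-connectivity)."""
    r, c = p
    return [(r + dr, c + dc) for dr, dc in OFFSETS if (r + dr, c + dc) in remaining]


def global_start(remaining: Set[Pixel]) -> Pixel:
    """Hypothetical stand-in for the global model: prefer an endpoint
    (a pixel with at most one untraced neighbour), breaking ties
    top-to-bottom, then left-to-right. Falls back to any pixel for loops."""
    endpoints = [p for p in remaining if len(neighbours(p, remaining)) <= 1]
    return min(endpoints or remaining)


def local_trace(start: Pixel, remaining: Set[Pixel]) -> List[Pixel]:
    """Hypothetical stand-in for the local model: follow untraced inked
    neighbours from start until none are left. Mutates `remaining`."""
    stroke = [start]
    remaining.discard(start)
    current = start
    while True:
        candidates = neighbours(current, remaining)
        if not candidates:
            return stroke
        current = min(candidates)  # deterministic choice among candidates
        remaining.discard(current)
        stroke.append(current)


def image_to_strokes(image: List[str]) -> List[List[Pixel]]:
    """Alternate global (where to start) and local (how to continue) steps."""
    remaining = ink_pixels(image)
    strokes: List[List[Pixel]] = []
    while remaining:
        start = global_start(remaining)
        strokes.append(local_trace(start, remaining))
    return strokes


if __name__ == "__main__":
    letter_t = [
        "#####",
        "..#..",
        "..#..",
    ]
    print(image_to_strokes(letter_t))
    # prints [[(0, 0), (0, 1), (0, 2), (0, 3), (0, 4)], [(1, 2), (2, 2)]]
```

On the T-shaped image, the sketch recovers two strokes: the horizontal bar drawn left to right, then the vertical stem drawn top to bottom. Real handwriting, with crossings, loops and ambiguous joins, is where fixed rules like these break down, and that is the kind of case the project's learned models are meant to handle.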

Tellex pointed out that the robot does not always make exactly the right strokes when writing letters, but “it is quite close.” What really matters, she notes, is how well the algorithm generalizes its ability to reproduce strokes.

“A lot of the existing work in this area requires the robot to have information about the stroke order in advance. If you want the robot to write something, someone has to program the order of the strokes. With what Atsu has done, you can draw whatever you want and the robot can reproduce it. It doesn't always imitate each stroke perfectly, but it's close enough.”

Writing “hello” in 10 different languages

Once the robot had demonstrated its ability, the next step was to test it in other situations to try to confuse it. The team asked 10 people from the ‘Humans to Robots’ laboratory, where Tellex works, to write ‘hello’ in their native languages and in their own handwriting.

This gave them ‘hello’ written in Greek, Hindi, Urdu, Chinese, Yiddish and other languages, and the robot reproduced them all with outstanding accuracy, which, they explain, made it difficult for other people to tell which versions were the robot's and which were the human's.

Next, they asked a group of 6-year-old children to write ‘hello’ as well, each in “broken” handwriting that was sometimes hard to read. According to the team, the robot copied the children's handwriting with apparent ease.

The final test: a sketch of the Mona Lisa

The final test of the capabilities of this robot, which by the way has no name, came when Tellex drew a small sketch of the Mona Lisa in simple strokes of her own and then let the robot look at it and imitate it.

According to Tellex, the robot copied the sketch quite faithfully. Kotani described the moment this way:

“When I got back to the lab, everyone was standing around the board, looking at the Mona Lisa and wondering if [the robot] had drawn that.” They could not believe it.

For the development team, that was the key moment: the robot showed that it went beyond mere printing, since it was able to create an image with strokes similar to a human being's.

Tellex says the Mona Lisa was drawn in August, and the team still keeps it on the board as a showcase of the robot's capabilities.

“What makes this work unique is the robot’s ability to learn from scratch.”

The team hopes the insights from their research can be used to build robots that can leave notes, take dictation and draw sketches, whether to communicate with humans directly or to serve as new communication tools. It could well be a step toward a new form of communication between humans and machines.